|

If (len(datay) > len(p0)) and pcov is not None: Optimize.leastsq(errfunc, p0, args=(datax, datay), \ Pfit, pcov, infodict, errmsg, success = \ Now, let's fit the function using the various methods available: `optimize.leastsq` def fit_leastsq(p0, datax, datay, function):Įrrfunc = lambda p, x, y: function(x,p) - y Yvals_err = np.random.normal(0., err_stdev, len(xdata)) # (the noise-less function that underlies the data is shown as a blue line) We will generate a dataset with a small random error. Let's define a squiggly line function and generate some data with random errors. Let's see some examplesįirst, some boilerplate code. In my opinion, the best way to deal with a complicated f(x) is to use the bootstrap method, which is outlined in this link. The results of the covariance matrix, as implemented by optimize.curvefit and optimize.leastsq actually rely on assumptions regarding the probability distribution of the errors and the interactions between parameters interactions which may exist, depending on your specific fit function f(x). How can I be sure that my errors are correct?ĭetermining a proper estimate of the standard error in the fitted parameters is a complicated statistical problem. The returned covariance matrix `pcov` is based on *estimated*Įrrors in the data, and is not affected by the overall If False, `sigma` denotes relative weights of the data points. If None, the uncertainties are assumed to be 1. If not None, the uncertainties in the ydata array. From the documentation: sigma : None or M-length sequence, optional You then need to take the square root of the diagonal elements of the covariance matrix to get an estimate of the standard deviation of the fit parameters.įurthermore, optimize.curvefit provides optional parameters to deal with more general cases, where the yerr_i value is different for each data point. On the other hand, if you use optimize.curvefit, the first part of the above procedure (multiplying by the reduced chi squared) is done for you behind the scenes. I have included the code to do that in one of the functions below. the reduced chi squared) and taking the square root of the diagonal elements will give you an estimate of the standard deviation of the fit parameters. Multiplying all elements of this matrix by the residual variance (i.e. The optimize.leastsq method will return the fractional covariance matrix. Which fitting function to use, and how to obtain the parameter errors? In most physical measurements, the error yerr_i is a systematic uncertainty of the measuring device or procedure, and so it can be thought of as a constant that does not depend on i. Let's think about fitting a function y=f(x) for which you have a set of data points (x_i, y_i, yerr_i), where i is an index that runs over each of your data points.

Updated on Getting the correct errors in the fit parameters can be subtle in most cases. This will be confirmed when I can get the errors out.

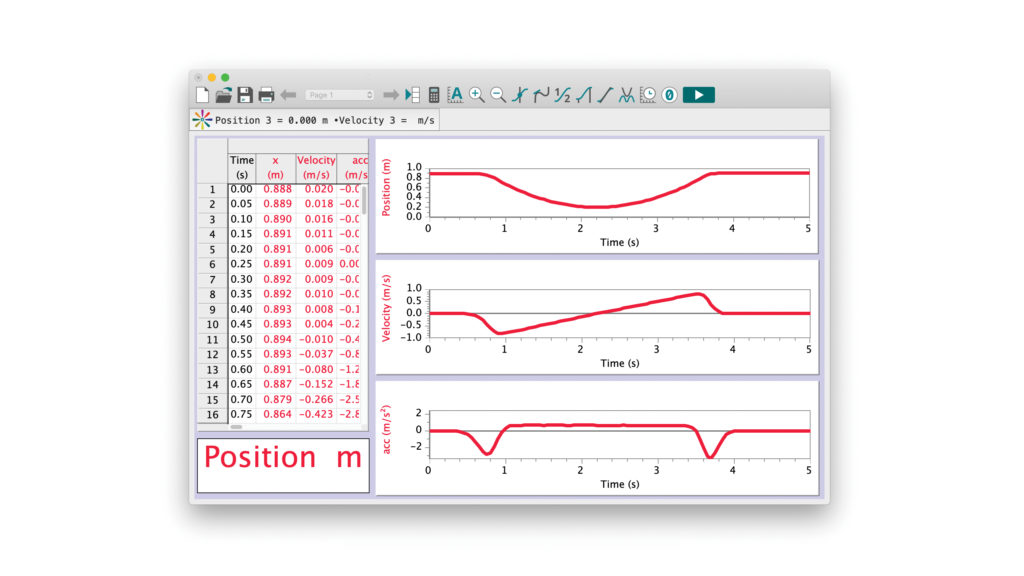

I suspect the fit is a little suspect anyway at the moment. The fitted values and the matrix provided by the output is as follows: I have imported everything needed at the tope of the code. The args t and disp is and array of time and displcement values (basically just 2 columns of data). Out = optimize.leastsq(errfunc, p0, args=(t, disp,), full_output=1) The fitting code is as follows: fitfunc = lambda p, t: p+p*np.log(t-p)+ p*t # Target function'Įrrfunc = lambda p, t, y: (fitfunc(p, t) - y)# Distance to the target function I am very new to python and therefore apologise if the question turns out to be a basic one. Is this correct? If not how would you go about getting the residual matrix to multiply the outputted Jacobian by to get my covariance matrix?Īny help would be greatly appreciated.

I have a vague memory of reading that the covariance matrix is what is output from the optimize.leastsq method anyway. Unfortunately I am not a statistician so I am drowning somewhat in the terminology.įrom what I understand all I need is the covariance matrix that goes with my fitted parameters, so I can square root the diagonal elements to get my standard error on the fitted parameters. Looking through the documentation the matrix outputted is the jacobian matrix, and I must multiply this by the residual matrix to get my values. I am now looking to get error values on the fitted parameters. I have a set of data (displacement vs time) which I have fitted to a couple of equations using the optimize.leastsq method.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed